News Release

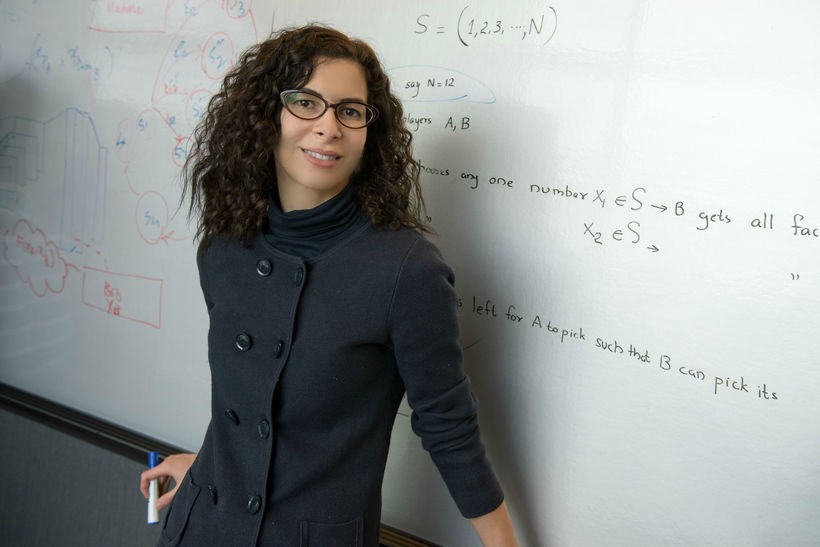

Electrical engineer selected to lead Intel AI project

Farinaz Koushanfar, a professor of electrical and computer engineering at the UC San Diego Jacobs School of Engineering, has been selected by Intel to lead one of nine inaugural research projects for the Private AI Collaborative Research Institute. The Institute, a collaboration between Intel, Avast and Borsetta, aims to advance and develop technologies in privacy and trust for decentralized artificial intelligence (AI). The companies issued a call for research proposals earlier this year and selected the first nine research projects to be supported by the institute at eight universities worldwide.


At UC San Diego, Koushanfar, who is co-director of the Center for Machine Integrated Computing and Security, will lead a project on Private Decentralized Analytics on the Edge (PriDEdge). Her team will focus on easing the computational burden of training and data management in federated machine learning (FL) by evaluating existing cryptographic primitives and devising new hardware-based primitives that complement the resources already available on Intel processors. The new primitives include accelerators for homomorphic encryption, Yao's garbled circuits and Shamir's secret sharing. Placing several cryptographic primitives on the same chip is intended to make optimal use of the hardware by letting these primitives share resources. The UC San Diego team also plans to design efficient systems by jointly optimizing the FL algorithms, defense mechanisms, cryptographic primitives and hardware primitives.

The Private AI Collaborative Research Institute will focus its efforts on overcoming five main obstacles posed by the current centralized approach:

· Training data is decentralized in isolated silos and often inaccessible. Most data stored at the edge is privacy-sensitive and cannot be moved to the cloud.

· Today’s solutions are insecure and require a single trusted data center. Centralized training can easily be attacked by modifying data anywhere between collection and the cloud. There is no framework for secure decentralized training among participants who may not trust one another.

· Centralized models become obsolete quickly. Infrequent batch cycles of collection, training and deployment lead to outdated models and make continuous and differential retraining impossible.

· Centralized compute resources are costly and throttled by communication and latency. Making training viable requires vast data storage and compute, as well as dedicated accelerators.

· Federated machine learning (FL) is limited. While FL can access data at the edge, it cannot reliably guarantee privacy and security.

Learn more about the Private AI Collaborative Research Institute and the inaugural university awardees.

Media Contacts

Liezel Labios

Jacobs School of Engineering

858-246-1124

llabios@ucsd.edu